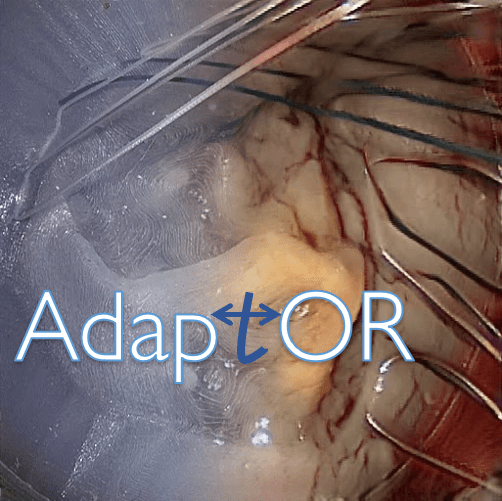

AdaptOR: Deep Image Generation Model Challenge in Surgery

++ The AdaptOR 2022 challenge is closed, and the datasets are no longer available through this platform ++

++ Important announcement on the released training data (Updated June 2nd, 2022) ++

A small number of the sub-folders (7 out of 64) had the images of the left and right cameras switched. This issue has now been fixed on Synapse. Please run the download_files.py script from Synapse again; it will download only the changed files and replace them on your system.

News

- April 15th, 2022 - The AdaptOR challenge 2022 is now open! Please follow our registration steps and check the details on submission.

- April 1st, 2022 - The AdaptOR challenge will be associated with the DGM4MICCAI workshop 2022!

- March 13th, 2022 - The 2nd Edition of the AdaptOR challenge will be held in conjunction with MICCAI 2022!

Timeline

Next upcoming important dates:

- July 1st, 2022 - Platform testing phase opens

- July 15th, 2022 - Submission system opens

Challenge Abstract

The continuing AdaptOR Challenge aims to spark methodological developments in deep image generation models for the surgical domain. In this year’s edition, we again focus on video-assisted mitral valve repair [1], which is becoming the new state of the art [2]. The use of 3D endoscopes in particular, which capture data with a left and a right camera and present it on a 3D-compatible monitor, has proven very beneficial because it increases depth perception. Moreover, the stereo relation can be exploited during post-processing to perform quantitative analyses.
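As a generic illustration of how the stereo relation enables quantitative analysis: for a rectified stereo pair, the depth of a point follows from its disparity via the pinhole relation Z = f · B / d, where f is the focal length in pixels, B the baseline between the two cameras, and d the disparity in pixels. The minimal sketch below applies this relation per pixel, assuming rectified images. The function name and the calibration values (`focal_px`, `baseline_mm`) are hypothetical and are not properties of the AdaptOR recordings.

```python
import numpy as np

def disparity_to_depth(disparity, focal_px, baseline_mm, eps=1e-6):
    """Convert a disparity map (pixels) of a rectified stereo pair to depth (mm).

    Hypothetical helper: uses the pinhole relation Z = f * B / d, with f the
    focal length in pixels and B the stereo baseline in mm. Pixels with zero
    (or near-zero) disparity have no finite depth and are returned as NaN.
    """
    disparity = np.asarray(disparity, dtype=np.float64)
    depth = np.full_like(disparity, np.nan)
    valid = disparity > eps
    depth[valid] = focal_px * baseline_mm / disparity[valid]
    return depth

# Hypothetical calibration values, for illustration only.
d = np.array([[10.0, 20.0], [0.0, 40.0]])
print(disparity_to_depth(d, focal_px=800.0, baseline_mm=5.0))
```

Because depth is inversely proportional to disparity, small disparity errors on distant structures translate into large depth errors, which is one reason accurate correspondences between the two views matter.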

To this end, and building upon the previous year's challenge [7], the AdaptOR Challenge 2022 proposes a novel view synthesis task for endoscopic data. During training, participants are provided with the left and right stereo camera images; at test time, the task is to predict the corresponding right-camera image for a given left-camera image. This serves two purposes: facilitating 3D perception when only 2D data are available, and providing relevant information for depth reconstruction, since novel view synthesis is an integral subtask of numerous cutting-edge depth estimation methods [5,6].
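The core operation behind disparity-based view synthesis can be sketched as backward warping. In a rectified stereo pair, a point at column x of the right image appears at column x + d of the left image, so the right view can be reconstructed by resampling each left-image row at those shifted positions. This is a generic sketch under that rectification assumption, not the method of [5] or [6] and not a challenge baseline. The function `warp_left_to_right` is hypothetical, and the disparity map it expects (defined on the right-image grid) would have to come from some model; it is not part of the challenge data.

```python
import numpy as np

def warp_left_to_right(left, disparity_right):
    """Synthesize a right-camera view from a left image by backward warping.

    Hypothetical helper. Assumes a rectified pair: right pixel (y, x) is
    sampled from left pixel (y, x + d), where d = disparity_right[y, x] >= 0.
    Sub-pixel positions use linear interpolation along the row. Samples that
    fall outside the left image are returned as NaN.
    """
    left = np.asarray(left, dtype=np.float64)
    disparity_right = np.asarray(disparity_right, dtype=np.float64)
    h, w = left.shape[:2]
    cols = np.arange(w, dtype=np.float64)
    right = np.full_like(left, np.nan)
    for y in range(h):
        src = cols + disparity_right[y]            # source columns in the left image
        inside = (src >= 0) & (src <= w - 1)       # keep only in-bounds samples
        if left.ndim == 2:                         # grayscale image
            right[y, inside] = np.interp(src[inside], cols, left[y])
        else:                                      # colour image: one channel at a time
            for c in range(left.shape[2]):
                right[y, inside, c] = np.interp(src[inside], cols, left[y, :, c])
    return right

# Toy usage: a random RGB "left image" and a hypothetical constant disparity.
left = np.random.rand(4, 6, 3)
disp = np.full((4, 6), 1.5)
right = warp_left_to_right(left, disp)
```

Right-image pixels whose source lies outside the left image (near the right border, where the right camera sees content the left one does not) stay NaN, which illustrates why view synthesis must also fill in unobserved content. In a training pipeline the same resampling would typically be done with a differentiable operation such as PyTorch's `torch.nn.functional.grid_sample`.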

The intraoperative datasets in this challenge vary in camera angle, illumination, and field of view, and contain occlusions from tissue and tubes as well as increased light reflections from surgical headlights. Particularly demanding in these scenes is the view-dependent appearance of objects directly in front of the camera (e.g., sutures). Such objects make it difficult to train models that faithfully predict the missing image of a stereo pair, or to establish correct correspondences between associated pixels. The proposed novel view synthesis task is therefore difficult to solve.

To augment the training split with data from a related domain, we additionally provide stereo frames from a mitral valve surgical simulator. These frames have comparatively stable illumination (less variation in reflections) and a more standardized viewing angle. Participants are invited to incorporate a domain adaptation approach (e.g., [3], [4], [8]) into their solution.

This year's dataset extends the one we released last year: it covers more surgeries and additional phases of mitral valve repair, which significantly increases the size of each split, and the images are provided at higher resolution.

References

[2] Casselman, F.P., Van Slycke, S., Wellens, F., De Geest, R., Degrieck, I., Van Praet, F., Vermeulen, Y., Vanermen, H.: Mitral Valve Surgery Can Now Routinely Be Performed Endoscopically. Circulation 108(10 suppl 1), II–48 (2003).

[3] Sharan, L., Romano, G., Koehler, S., Karck, M., De Simone, R., Engelhardt, S.: Mutually Improved Endoscopic Image Synthesis and Landmark Detection in Unpaired Image-to-Image Translation. IEEE Journal of Biomedical and Health Informatics 26(1), 127–138 (2022). doi: 10.1109/JBHI.2021.3099858, https://arxiv.org/abs/2107.06941

[4] Engelhardt, S., Sharan, L., Karck, M., De Simone, R., Wolf, I.: Cross-Domain Conditional Generative Adversarial Networks for Stereoscopic Hyperrealism in Surgical Training. In: Shen, D. et al. (eds) Medical Image Computing and Computer Assisted Intervention – MICCAI 2019. Lecture Notes in Computer Science, vol. 11768, pp. 155–163. Springer, Cham (2019). doi: 10.1007/978-3-030-32254-0_18, https://arxiv.org/abs/1906.10011

[5] Watson, J., Mac Aodha, O., Turmukhambetov, D., Brostow, G.J., Firman, M.: Learning Stereo from Single Images. arXiv:2008.01484 [cs] (2020). http://arxiv.org/abs/2008.01484

[6] Hou, Y., Solin, A., Kannala, J.: Novel View Synthesis via Depth-guided Skip Connections. arXiv:2101.01619 [cs] (2021). http://arxiv.org/abs/2101.01619

[7] AdaptOR Challenge 2021. https://adaptor2021.github.io/, doi: 10.5281/zenodo.4646979

[8] Engelhardt, S., De Simone, R., Full, P.M., Karck, M., Wolf, I.: Improving Surgical Training Phantoms by Hyperrealism: Deep Unpaired Image-to-Image Translation from Real Surgeries. In: Frangi, A., Schnabel, J., Davatzikos, C., Alberola-López, C., Fichtinger, G. (eds) Medical Image Computing and Computer Assisted Intervention – MICCAI 2018. Lecture Notes in Computer Science, vol. 11070. Springer, Cham (2018). doi: 10.1007/978-3-030-00928-1_84

Rules

- No additional training data and no models pre-trained on other datasets are allowed.

- Each team may register only once, and all submissions must be made from the same account.

- Each participant may be a member of only one team.

- Participants agree to take part in a challenge publication, which the organisers will plan once a sufficient number of submissions has been received.

Prizes

Details about potential prizes will be announced in the future. Certificates will be provided for the top 3 performing teams.

Potential Future Plans

Our goal is to extend the dataset with additional cases and to establish a recurring AdaptOR event to support progress in this application field. The 1st edition of AdaptOR was held at MICCAI 2021.